One of the most perplexing issues in technical SEO is a page that is fully indexable but still not indexed by Google. You’ve checked your robots.txt, there’s no ‘noindex’ tag, and the page returns a 200 OK status code. Yet it remains invisible in the search results. This usually signals that, although the page is technically accessible, Google does not consider it valuable enough to include in its index.

This is different from a simple crawling error. It’s a quality judgment. Google has visited the page (or knows of its existence) and has made an editorial decision to exclude it. Understanding why is crucial for fixing the root cause and ensuring your important content makes the cut. For a broader look at this topic, see our main guide on the indexability category.
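Before treating the problem as a quality judgment, it is worth confirming that the technical checks really do pass. The sketch below is one possible way to do that in Python using only the standard library. It assumes you have already fetched the page and its robots.txt yourself; `find_blockers` and its parameters are hypothetical names for illustration, not part of any Google tool.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class _RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.append(attr.get("content", "").lower())


def find_blockers(url, status_code, headers, html, robots_txt, user_agent="Googlebot"):
    """Return the technical reasons a URL cannot be indexed (empty list if none)."""
    blockers = []

    # 1. The page should return 200 OK.
    if status_code != 200:
        blockers.append(f"status code is {status_code}, not 200")

    # 2. robots.txt should allow the crawler to fetch the URL.
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    if not robots.can_fetch(user_agent, url):
        blockers.append("blocked by robots.txt")

    # 3. No noindex directive in the X-Robots-Tag response header.
    for name, value in headers.items():
        if name.lower() == "x-robots-tag" and "noindex" in value.lower():
            blockers.append("noindex in X-Robots-Tag header")

    # 4. No noindex directive in a robots meta tag.
    parser = _RobotsMetaParser()
    parser.feed(html)
    if any("noindex" in directive for directive in parser.directives):
        blockers.append("noindex in meta robots tag")

    return blockers
```

An empty list means the page is technically indexable, which is the situation this article is about. Any entry in the list is a technical blocker to fix first.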

Diagnosing the ‘Why’: Common Reasons for Exclusion

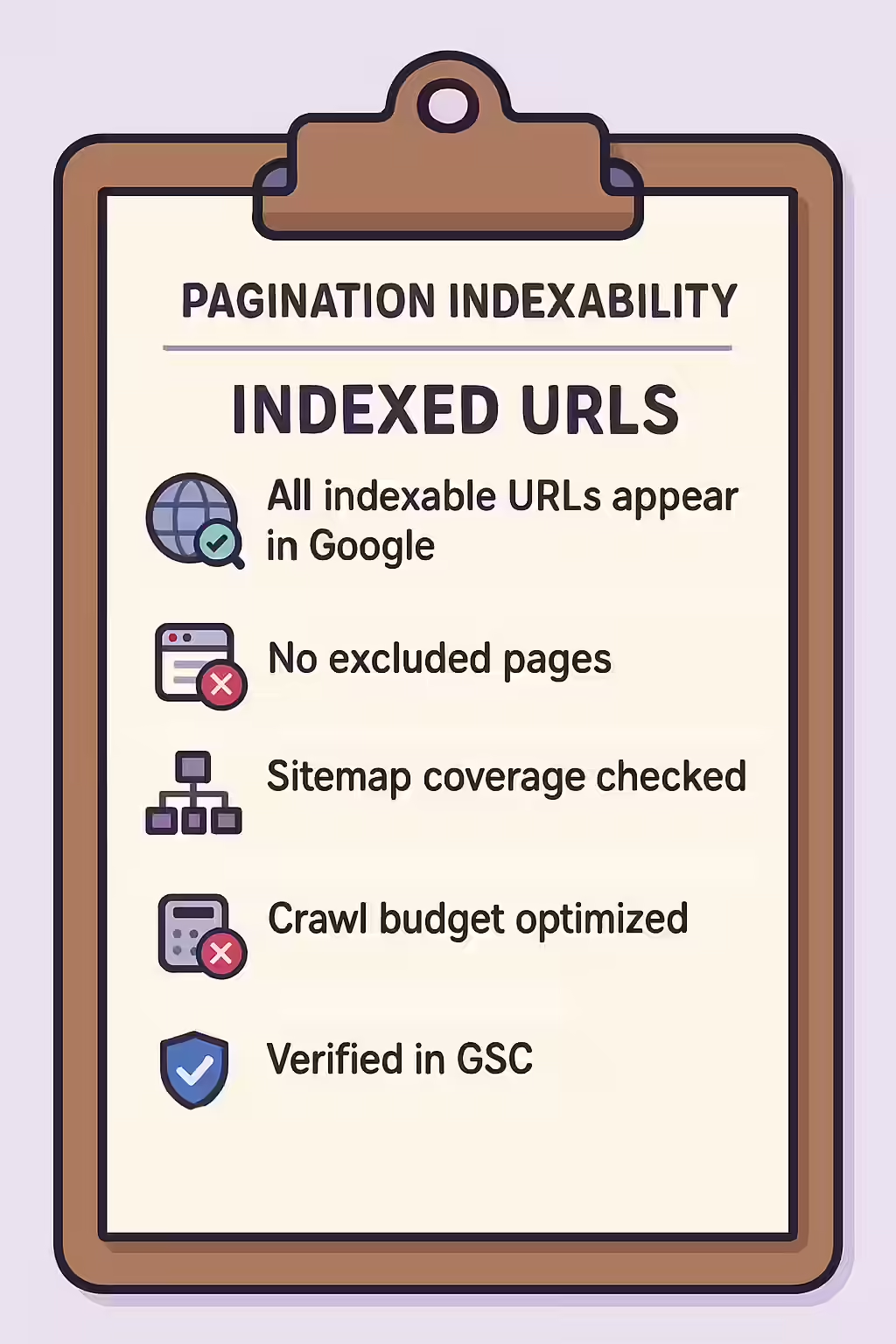

When a page is technically sound but not indexed, the problem usually falls into one of three categories. The Page indexing report in Google Search Console (formerly called the Index Coverage report) is your primary tool for diagnosing these issues.

- Low-Quality or Thin Content: The page may have very little unique text, or the content may be generic, unhelpful, or substantially similar to other pages on your site (see near-duplicates).

- Poor Internal Linking: The page may be an orphan page with few or no internal links pointing to it. This signals to Google that the page is not important within your own site’s hierarchy.

- Crawl Budget Issues: If your site is very large, Google may not have the resources to crawl and index every single page. It will prioritize the pages it deems most important, often leaving deeper, less-linked pages behind.
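The internal-linking category is the easiest of the three to check mechanically. As a rough sketch, suppose you have exported your site's internal links from a crawler into a dictionary that maps each page to the pages it links to. That input format and the function names below are assumptions for illustration. Counting inbound links then surfaces orphan and weakly linked pages:

```python
from collections import Counter


def count_inlinks(link_graph):
    """Map each known page to the number of distinct other pages linking to it."""
    inlinks = Counter({page: 0 for page in link_graph})
    for source, targets in link_graph.items():
        for target in set(targets):
            if target != source:  # a self-link says nothing about importance
                inlinks[target] += 1
    return inlinks


def weakly_linked(link_graph, min_inlinks=1, ignore=("/",)):
    """Return pages with fewer than `min_inlinks` internal links, sorted by path."""
    inlinks = count_inlinks(link_graph)
    return sorted(
        page for page, n in inlinks.items() if n < min_inlinks and page not in ignore
    )


# Hypothetical crawl export: page -> pages it links to.
site = {
    "/": ["/blog/", "/pricing"],
    "/blog/": ["/blog/post-a", "/"],
    "/blog/post-a": ["/blog/"],
    "/blog/post-b": ["/blog/"],  # nothing links here
    "/pricing": ["/"],
}

print(weakly_linked(site))  # ['/blog/post-b']
```

Raising `min_inlinks` widens the net from true orphans to pages that are linked only once or twice, which is often where deeper, less-linked pages show up.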

A Step-by-Step Guide to Getting Your Pages Indexed

Fixing this issue requires improving the quality and authority signals for the affected page. For a deep dive into content quality, this guide from Ahrefs on getting your site indexed is an excellent resource.

- Start with Google Search Console: Use the URL Inspection tool on the specific URL. This will tell you its current status and often provide a reason for its exclusion (e.g., “Crawled – currently not indexed”).

- Enhance the Content: Substantially improve the page’s content. Add more unique text, helpful details, images, and data. Ensure it provides real value to a user and is superior to competing pages.

- Improve Internal Linking: Add several high-quality internal links (three to five is a reasonable target) from relevant, authoritative pages on your site to the page you want indexed. This tells Google that you consider the page to be important.

- Request Re-indexing: After improving the content and internal linking, use the “Request Indexing” button in the URL Inspection tool to push the page into a high-priority crawl queue.
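For the content step, it can help to put a number on how much of the page's text is actually unique compared with a sibling page. The sketch below uses word shingles and Jaccard similarity, a common textbook technique for spotting near-duplicates. It is not Google's method, which is not public, and the function names are illustrative.

```python
import re


def shingles(text, size=3):
    """Return the set of overlapping word n-grams ("shingles") in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(text_a, text_b, size=3):
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


page_a = "red widget for sale in london"
page_b = "red widget for sale in paris"
print(jaccard_similarity(page_a, page_b))  # 0.6
```

A score close to 1.0 between two of your own pages suggests they differ only in a few swapped words, which is the kind of page that benefits most from a substantial rewrite before you request indexing. Any threshold you pick is a judgment call.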

Frequently Asked Questions

What’s the difference between ‘Discovered – currently not indexed’ and ‘Crawled – currently not indexed’?

‘Discovered’ means Google knows the URL exists but hasn’t crawled it yet, often due to perceived low importance or crawl budget issues. ‘Crawled’ is more serious; it means Google has visited the page but decided it wasn’t valuable enough to add to the index, pointing to a content quality problem.
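If you are working through many URLs, the distinction can be turned into a simple triage rule. The sketch below assumes you have copied each URL's status text out of Search Console. It matches on keywords rather than exact strings, because the exact wording and punctuation of the status may vary. The `triage` function and its return messages are hypothetical.

```python
def triage(status):
    """Map a Search Console status string to a suggested next step."""
    text = status.lower()
    if "not indexed" in text and "discovered" in text:
        # Google knows the URL but has not crawled it yet.
        return "not crawled yet: add internal links and review crawl budget"
    if "not indexed" in text and "crawled" in text:
        # Google crawled the page and chose not to index it.
        return "crawled but excluded: improve content quality and uniqueness"
    if "indexed" in text and "not indexed" not in text:
        return "indexed: no action needed"
    return "other status: check the reason shown in Search Console"


for status in ("Discovered - currently not indexed", "Crawled - currently not indexed"):
    print(status, "->", triage(status))
```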

Does submitting a sitemap guarantee indexing?

No. A sitemap is a hint to Google about which URLs you would like crawled and indexed, but it does not guarantee indexing. If the page is low-quality or has no internal links, Google may still choose to exclude it from the index.
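If you do not have a sitemap yet, a minimal one is easy to generate. The sketch below builds a file in the standard sitemaps.org format using Python's standard library. The `build_sitemap` name and the example URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML (as UTF-8 bytes) from (absolute_url, lastmod_or_None) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:  # lastmod is optional; use a W3C date such as YYYY-MM-DD
            ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


sitemap = build_sitemap([
    ("https://example.com/blog/post-a", "2024-01-15"),
    ("https://example.com/blog/post-b", None),
])
print(sitemap.decode("utf-8"))
```

Listing a URL here only tells Google that the page exists and when it last changed. It does not replace the content and internal-linking work described above.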

Can I force Google to index my page?

You can’t force it, but you can strongly request it. The best way is to use the ‘Request Indexing’ feature in Google Search Console’s URL Inspection tool. This pushes the URL into a high-priority crawl queue. However, if there’s an underlying quality issue, Google may still choose not to index it.
