In JavaScript-heavy websites, the signals you send to search engines must be consistent before and after rendering. A serious conflict occurs when a `noindex` directive is present in the initial HTML but is then removed by JavaScript. This is ambiguous for Google: its crawler sees a `noindex` tag in the raw HTML during the first wave, but the tag is gone once the page is fully rendered in the second wave. This contradiction can lead to unpredictable indexing behavior and points to a technical problem on the site.

Think of it as sending a letter to the post office with a “Return to Sender” sticker, only to have a different messenger intercept it and remove the sticker before it’s processed. The post office is left with conflicting instructions, and the final destination of your letter is uncertain. For a broader look at JavaScript-related issues, see our on-page SEO category.

HTML vs. Rendered DOM: The Source of the Conflict

To understand this issue, you need to know how Google processes pages. As detailed in their guide to JavaScript SEO basics, it’s a two-wave process:

- Crawling (Wave 1): Googlebot fetches the raw HTML file from your server. At this stage, it sees the `<meta name="robots" content="noindex">` tag and may initially de-index the page.

- Rendering (Wave 2): Later, Google renders the page to execute JavaScript. If a script removes the `noindex` tag, the final rendered HTML (or DOM) no longer contains the directive.

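This conflict between the two versions is the core of the problem: Google receives contradictory information about whether you want the page indexed. In practice, the `noindex` in the initial HTML is likely to win. Google's JavaScript SEO documentation notes that Googlebot skips rendering and JavaScript execution when the robots meta tag initially contains `noindex`, so the script that removes the tag may never run, and a page you intended to have indexed can stay out of the index. Because the outcome depends on which version Google acts on, the safest assumption is that indexing will be unpredictable.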
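The comparison between the two versions can be sketched in code. The following is a minimal illustration, not a production audit tool: the function names `extractRobotsDirectives` and `compareRobotsDirectives` are hypothetical, and it assumes you have already captured the raw HTML and the rendered HTML as strings (for example, from View Page Source and from the rendered DOM). It only looks at `<meta name="robots">` tags and uses a simple regex scan where a real tool would use an HTML parser.

```javascript
// Hypothetical helper: collect robots directives from an HTML string.
// The regex scan is a simplification; a real audit would use an HTML parser.
function extractRobotsDirectives(html) {
  const directives = new Set();
  const metaTags = html.match(/<meta\b[^>]*>/gi) || [];
  for (const tag of metaTags) {
    const name = /\bname\s*=\s*["']?([^"'\s>]+)/i.exec(tag);
    const content = /\bcontent\s*=\s*["']([^"']*)["']/i.exec(tag);
    if (!name || !content) continue;
    if (name[1].toLowerCase() !== 'robots') continue;
    // A content value such as "noindex, nofollow" holds several directives.
    for (const part of content[1].split(',')) {
      const value = part.trim().toLowerCase();
      if (value) directives.add(value);
    }
  }
  return directives;
}

// Hypothetical helper: report directives that differ between the raw HTML
// (wave 1) and the rendered HTML (wave 2).
function compareRobotsDirectives(rawHtml, renderedHtml) {
  const raw = extractRobotsDirectives(rawHtml);
  const rendered = extractRobotsDirectives(renderedHtml);
  const removedByJs = [...raw].filter((d) => !rendered.has(d)).sort();
  const addedByJs = [...rendered].filter((d) => !raw.has(d)).sort();
  return {
    consistent: removedByJs.length === 0 && addedByJs.length === 0,
    removedByJs,
    addedByJs,
  };
}

// Usage: the raw HTML carries noindex, the rendered HTML does not.
const rawHtml = '<head><meta name="robots" content="noindex"></head>';
const renderedHtml = '<head><title>Page</title></head>';
console.log(compareRobotsDirectives(rawHtml, renderedHtml));
```

A non-empty `removedByJs` list corresponds to the conflict described above, while a non-empty `addedByJs` list corresponds to the reverse case where a script injects a directive after rendering.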

How to Synchronize Your HTML and JavaScript Directives

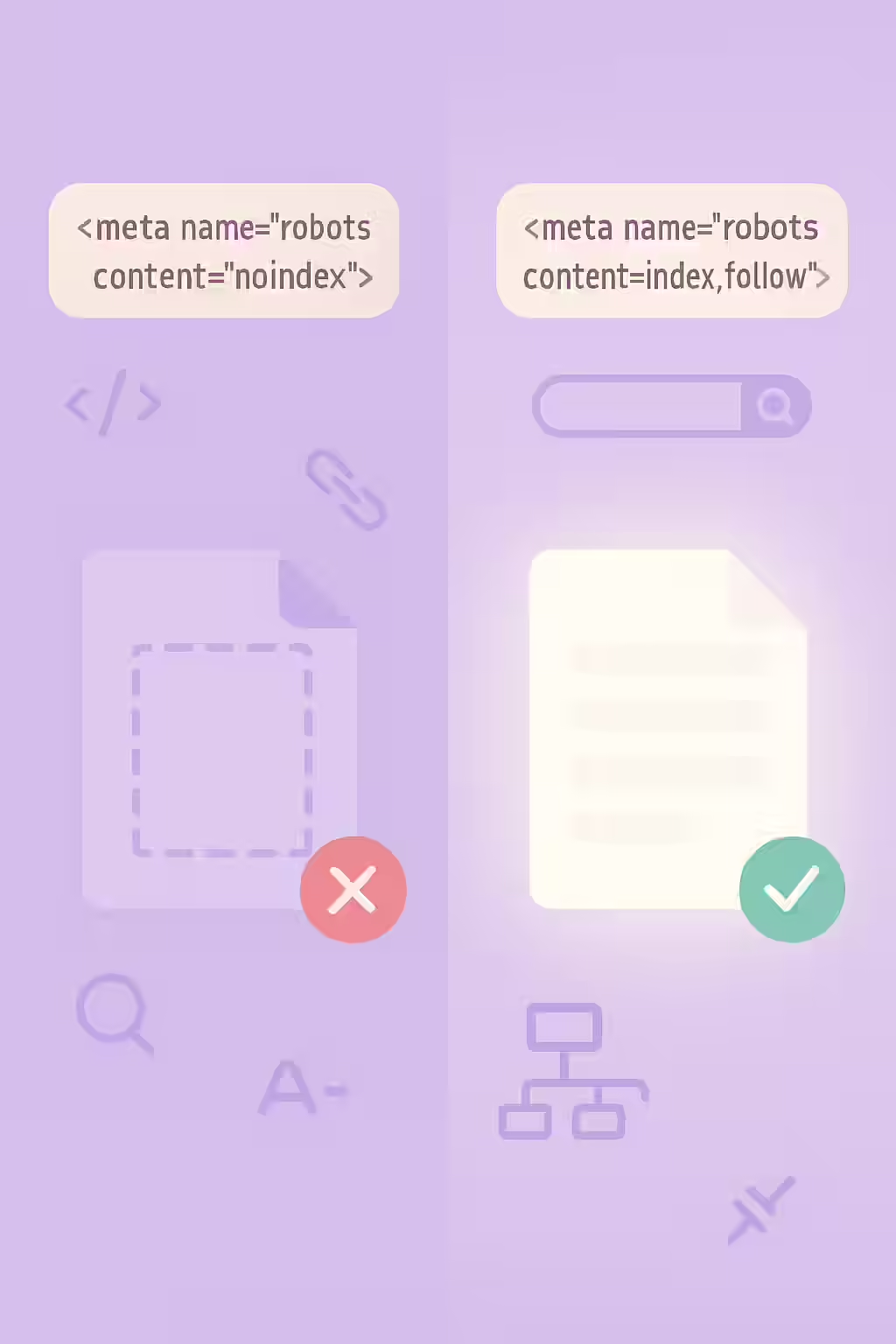

The goal is to ensure the state of your indexing directives is consistent in both the raw and rendered HTML. This provides clear, unambiguous instructions to search engines. For a deep dive into this topic, this guide from Moz on JavaScript SEO is an excellent resource.

Example: Ensuring a Consistent `noindex`

    <!-- Before: JS removes the noindex tag -->
    <head>
      <meta name="robots" content="noindex">
      <script>
        // Some script that removes the meta tag
      </script>
    </head>

    <!-- After: The noindex tag is consistently present (or absent) -->
    <head>
      <meta name="robots" content="noindex">
    </head>

For more on this topic, see our guide on noindex and nofollow.
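One way to reach the "After" state is to decide indexability on the server and emit the tag in the initial HTML, so that no client-side script needs to add or remove it. The sketch below assumes a hypothetical `renderHead` helper and a hypothetical page object with `title` and `indexable` fields; your framework or CMS will have its own equivalent.

```javascript
// Hypothetical server-side helper. The page object shape ({ title, indexable })
// and the function names are assumptions made for illustration.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

function renderHead(page) {
  const lines = ['<head>', `  <title>${escapeHtml(page.title)}</title>`];
  // Emit the directive in the initial HTML so the raw and rendered
  // versions agree; no client-side script should add or remove it.
  if (!page.indexable) {
    lines.push('  <meta name="robots" content="noindex">');
  }
  lines.push('</head>');
  return lines.join('\n');
}

// Usage: a page that should stay out of the index.
console.log(renderHead({ title: 'Internal search results', indexable: false }));
```

Because the decision is made once, before the response is sent, the raw HTML and the rendered DOM carry the same directive.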

Frequently Asked Questions

What if JavaScript *adds* a ‘noindex’ tag instead of removing it?

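This is a more common scenario, often used to `noindex` thin content pages like search results after they are rendered. However, it still creates a conflict: the raw HTML says the page is indexable, and the directive only becomes visible once the page has been rendered, which can happen later than the initial crawl. The best practice is consistency. If a page should be noindexed, the directive should be present in the initial HTML to avoid any ambiguity for search engines.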

Does this apply to other meta tags?

Yes. Any modification of the meta robots tag with JavaScript can cause confusion. This includes changing a `noindex` to an `index` or adding a `nofollow` on the client side. These directives should be set in the initial HTML.

How can I check the rendered HTML for a ‘noindex’ tag?

You can use the ‘Inspect’ tool in Chrome DevTools. Right-click on the page and choose ‘Inspect.’ In the ‘Elements’ tab, search for ‘noindex’ to see if the tag exists in the final, rendered DOM. You can then compare this to the initial HTML by choosing ‘View Page Source.’
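If you prefer the Console tab over searching the Elements tab, a short snippet can list the robots directives in the rendered DOM. This is a minimal sketch: the function name `getRenderedRobotsDirectives` is hypothetical, and it only reads `<meta name="robots">` tags. Paste it into the DevTools console on the page you want to check, then compare the output with what View Page Source shows.

```javascript
// Hypothetical helper: returns the content value of every robots meta tag
// found in the given document (the rendered DOM when run in DevTools).
function getRenderedRobotsDirectives(doc) {
  // The trailing "i" makes the attribute value match case-insensitive.
  return Array.from(doc.querySelectorAll('meta[name="robots" i]'))
    .map((meta) => (meta.getAttribute('content') || '').toLowerCase());
}

// In the DevTools console, `document` is the rendered DOM.
if (typeof document !== 'undefined') {
  console.log(getRenderedRobotsDirectives(document));
}
```

An empty array means no robots meta tag exists in the rendered DOM, so a `noindex` that appears in the page source was removed during rendering.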

Is your JavaScript sending mixed signals? Start your Creeper audit today to find and fix rendering issues.